The Limits of Computer Vision, and of Our Own

In fields such as radiology, AI models can help compensate for the shortcomings of human vision but have weaknesses of their own

- 13 min read

- Feature

Have you ever locked eyes with a horseshoe crab? You might have without realizing it — with ten eyes scattered across their outer shell, their peepers look nothing like our own. Chameleons have only two eyes but can move them independently, allowing the lizards to shift between binocular and monocular vision. And bees can see ultraviolet light, which helps them find nectar.

All of these vision systems share the same goal: to filter an infinitely nuanced reality into useful bits of information. But they go about it differently, offering a reminder that “there isn’t one unique solution to vision,” says Gabriel Kreiman, an HMS professor of ophthalmology at Boston Children’s Hospital.

Kreiman often reflects on the diversity found in the natural world as he fine-tunes another approach to sight: computer vision using artificial intelligence. “You can think of AI like a new species, sometimes with novel and creative solutions to vision problems,” he says.

AI tools can now drive cars, recognize faces, and inspect medical scans, and by some measures they can outperform humans. But as more and more visual tasks are being outsourced to computers, Kreiman and other researchers are working to identify AI’s blind spots, examining how much we can trust computer vision — and how it stacks up against our own.

Gorillas in the midst

“We’re convinced that when we open our eyes and look, we are seeing truth with a capital T,” says Jeremy Wolfe, HMS professor of ophthalmology and professor of radiology and director of the Visual Attention Lab at Brigham and Women’s Hospital. “But we are seeing through a visual system and a cognitive system that has some very profound limits to it.”

A classic example of those limits involved a 1999 Harvard experiment with a gorilla — or, more accurately, a person in a gorilla suit. Study participants watched a video of a group tossing basketballs, counting how many times the players in white shirts passed a ball. After about 30 seconds, a person in a gorilla suit strolled across the screen. Later, the participants were asked whether they’d noticed the gorilla. Half of them were completely oblivious to it. The reason relates to attention: While focusing on one thing, we tend to miss other things right in front of our eyes.

Example 1: What’s behind the curtain?

You probably know that you saw some plus signs and some X’s, and that the colors were red, green, blue, and yellow. But were the vertical bits of the red-green plus signs red or green? You’re most likely unsure, unless you go back to check. Wolfe says that’s because “binding” the colors to their orientations requires selective attention. The visual system has two pathways. One is nonselective — it is able to appreciate the colors, orientations, and layout of the scene, but it can’t read, recognize a face, or parse which lines of a plus sign are green. The other pathway is selective — it recognizes the details. “You need the selective pathway to bind things together,” says Wolfe, “but that pathway can check only one or two items at a time.”

Wolfe has spent his career exploring the factors that conspire to sway our attention from the obvious, including our expectations, distracters, and how unusual an object is. Errors are “absolutely ubiquitous in human behavior,” Wolfe says, and they often seem glaringly obvious in retrospect.

Wolfe is particularly interested in how these forces affect people who are trained to inspect images. In one study, Wolfe and colleagues asked radiologists to count cancerous nodules in five sets of CT scans, each consisting of a series of individual images of lungs. What the researchers didn’t share, however, was that in the final set they had pasted an image of a gorilla onto the lung scans. Remarkably, 83 percent of the radiologists missed the gorilla, even when eye-tracking software showed that their eyes had landed on it and even though it was about 48 times larger than the nodules they were searching for.

This “inattentional blindness” is completely normal, but the consequences can be serious, sometimes leading to overlooked diagnoses and harm to patients. Studies suggest that error rates in radiology, which hover around 4 percent for all images, and up to 30 percent among abnormal images, have remained relatively unchanged since the 1940s. Medical imaging is therefore among the fields in which computer vision is touted as a way to decrease error rates by picking up on the clues that humans miss — or even someday doing the job solo.

Example 2: Can you spot the difference?

If it took a while to catch the disappearing chicken, that’s because of “change blindness.” Humans can easily miss differences if we aren’t paying attention to the right object during a quick eye movement, camera cut, or other distraction.

This matters in a field like radiology, where physicians often compare a patient’s previous and current medical scans side by side, looking back and forth to see whether innocuous features have evolved into something more menacing. Wolfe and colleagues have used image pairs like this to test whether alternating between images on top of one another instead of side by side can improve the likelihood that radiologists will spot a change. They’ve found that alternating between images does help. And, as illustrated above, changes are easier to spot if the angle of the two images remains static. Real-life medical images tend to have slight shifts in angle from year to year, but research indicates that minimizing those shifts could improve detection of changes.

How computers see

Before computers could be tasked with reviewing medical scans, they needed to be trained to see. In 1966, scientists at MIT put out a call for undergraduates to take on, as a summer project, the development of a computer program that could identify objects. “Needless to say, they quickly realized that the problem was harder than they expected,” says Kreiman. It became a decades-long challenge: How could a computer mimic a natural process shaped by millions of years of evolution?

“The problem of vision is how to turn patterns of light into a representation of what is out there,” says Talia Konkle, professor of psychology at Harvard. When humans see, photons bounce off the surfaces around us, hitting the back of our eyeballs. That input turns into neural activity that helps us understand what’s in front of us. In that sense, Konkle says, the goal of computer vision is similar, “to take light captured by a camera as images or videos, which are essentially just numbers, and then process those into representations that can do similar tasks,” such as recognizing objects.

Early approaches to computer vision required a painstaking process that Konkle calls hand-designing features — for example, building an algorithm to detect cars by training it to look for circles or contours in certain areas.

Over time, this approach was replaced by deep learning, which relies on an architecture with striking similarities to the human visual system. The smallest building blocks are computational units inspired by neurons in the brain. Like neurons, they take in inputs and add them up; if the result passes a certain threshold, they fire.

AI models called convolutional neural networks — now the most common model for computer vision — take networks of these building blocks to an enormous scale, organizing them in hierarchical layers. Like the layers of neurons in our brains, they’re each responsible for processing small, overlapping regions of an image, detecting features like shapes and textures. These so-called convolutional layers are interspersed with layers that generalize information so the computer can combine simple features into increasingly complex ones, as well as with layers that process the information to complete a given task.

Using this approach, computers can learn from labeled images how to recognize a car or any other object. Through trial, error, and self-correction, the computer can start to associate specific features with cars, transforming that knowledge into mathematical equations that then help it recognize cars in images it hasn’t seen before. Similarly, feed a model thousands of labeled medical images, and the network will learn to recognize the patterns that indicate abnormalities. It can then draw from what it’s learned to interpret new scans.

Thanks to the vast quantities of digital images available to train on, as well as advances in computing power, these models have greatly improved in recent years, giving them “really interesting properties,” says Konkle, who is among a growing number of vision scientists now using deep neural networks to learn more about how humans see.

“The level of complexity of the biological system so far overshadows these models; it’s not even funny,” Konkle adds. “But as information processing systems, there are deep principles about how they solve the problem of handling the rich structure of visual input that really seem to be like how the human visual system is solving the problem, too.”

Predictive vision

Michael T. Lu, MD ’07, an HMS associate professor of radiology at Massachusetts General Hospital, is taking advantage of the strengths of computer vision to spot potential health problems that would typically go unnoticed. Along with assistant professor Vineet Raghu, an assistant professor of radiology at Mass General, Lu developed a computer vision model called CXR Lung-Risk, which was trained using chest X-rays from more than 40,000 asymptomatic participants in a lung cancer screening trial. Testing the model on chest X-rays from individuals it hadn’t seen, the researchers found that CXR Lung-Risk could identify people at high risk of developing lung cancer years into the future with impressive accuracy, beyond the current lung cancer screening guidelines used by Medicare.

Lu and colleagues have used similar models to identify individuals at higher risk of dying from other lung-related diseases and detect cancer warning signs among people who have never smoked. About 10 to 20 percent of lung cancers occur among never smokers, and while incidence is on the rise in this group, these individuals aren’t typically screened, resulting in cancers that tend to be more advanced once detected. But these AI tools could potentially catch dire warning signs in basic tests commonly available in patients’ medical records — signs that humans aren’t usually looking for.

The findings hint at the tantalizing possibility that AI can see into the future. Lu, however, is skeptical. “Some people have described it as superhuman,” he says. “But I don’t know if that’s really true. There’s nothing on the images that a human couldn’t see.”

Lu thinks a trained radiologist would probably perform just as well on this task as the AI models he has tested, and some research does support that claim. In one study, Wolfe and colleagues displayed a series of mammogram images, each for a half second, and asked mammographers if they sensed anything that warranted further examination. Half the scans had been acquired from cancer patients three years before any signs of confirmed cancer appeared, while the other half came from women who did not develop cancer. The experts could distinguish normal from abnormal images at above-chance levels, despite the fact that there were not yet any visible signs of cancer.

Example 3: Try to spot the chimpanzee

You may not have noticed every detail in the fast-moving images above, but chances are you caught the chimpanzee. It turns out that even if focusing on specific objects requires selective attention, humans are pretty good at quickly getting the overall gist of images in a fraction of a second. Similarly, trained radiologists are good at catching the gist of medical scans very quickly.

But even though human radiologists can spot such discrepancies, most are focused on making a diagnosis rather than a long-term prognosis. And AI provides an advantage in scale. Training a radiologist for years is expensive and time-consuming. Meanwhile, the sheer quantity of radiological image data has surpassed the number of experts available to interpret it, increasing their workloads.

One study found, for example, that radiologists would need to interpret an image every three to four seconds to keep up. Once an AI model is trained, it can be used over and over again in many hospitals at a low cost. And models like Lu’s could potentially be deployed within electronic medical records as a kind of opportunistic screening, to flag existing scans for abnormalities that might otherwise go unnoticed.

Adding context to AI

Despite these advantages, there are good reasons to move cautiously in deploying vision models in radiology and other fields. Kreiman spends a lot of time thinking about computer vision’s shortcomings as he works to build better models. Recently, this has involved teaching models to lighten up — to understand when images are funny. It’s not easy. “Humor is about more than extracting visual features,” he says. “It requires understanding human intentions.”

Example 4: Understanding context

A team of HMS researchers asked three AI vision models to interpret this image. Instead of seeing a giant chair, the models were way off: one thought it was a guillotine, another saw a turnstile, and the third saw a forklift. That’s because some models are easily tricked when an object appears in an unfamiliar context. The team thus proposed a new AI model that would tackle this shortcoming. Drawing from the neuroscience of human vision to incorporate both the selective and nonselective paths of vision described in example 1, the model aimed to help the computer better integrate both the object of focus and its surroundings, much like humans do.

Not all AI models need to crack jokes, but a missing sense of humor illustrates an important shortcoming of many computer vision models: the inability to understand context, which involves weighing the relative importance of objects within their surroundings. For more advanced interpretation, understanding context also involves deciphering relationships, interpreting what could happen before or after — things humans do effortlessly thanks to real-world experience.

Another concern is that even if a model seems accurate, it is not always clear what it’s seeing. An early computer vision model to identify pneumonia was trained on images from two patient populations: one from an emergency department and one from an outpatient clinic. The model seemed pretty good at predicting pneumonia — until researchers realized it hadn’t actually been looking for pneumonia in the scans. Rather, it was identifying the type of marker that technicians had used to label the left side of the images in the ED, where patients were more likely to have pneumonia.

“AI models can be brittle — they can fail in populations different from the ones they’ve been trained on,” says Lu. “So it’s important to validate them in completely different datasets from different populations.” This step is essential to reduce algorithmic bias. If images used for training don’t reflect the socioeconomic, demographic, and geographic makeup of the populations a model will be used for, their efficacy can plummet in a new context.

AI models can also magnify existing biases in the medical records. A 2021 study from a team at MIT, for example, found that chest X-ray images from Black patients, female patients, Hispanic patients, and patients on Medicaid were more likely to be underdiagnosed by an AI model reviewing them. The researchers suggested these differences stemmed from human-caused biases in the medical records used to train the AI. But they also warned that the algorithms can exacerbate those biases, compounded by a lack of data and small sample sizes in underserved groups that might have fewer interactions with the health care system.

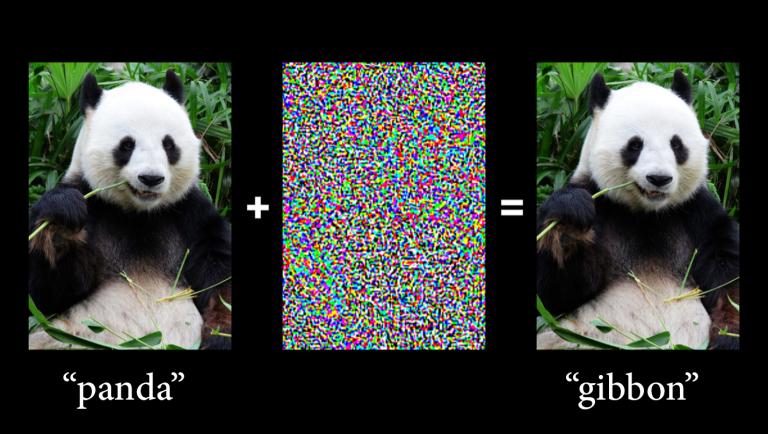

Example 5: Adversarial attacks

The fragility of computer vision models is especially clear in the case of “adversarial attacks,” in which malicious actors add small changes to an image, often invisible to human eyes, that completely alter a model’s reading. These perturbations are like optical illusions for AI — slight modifications to pixel values or the addition of patterns or textures that cause misclassification.

Take the panda above, inspired by an example from a classic 2014 study by researchers at Google. What seemed like random noise was actually carefully calculated, based on patterns that occurred more frequently in gibbon photos than in panda photos during the AI model’s training. When that noise was added to the panda image, the computer saw patterns that it had learned to link with a gibbon and reclassified the image confidently.

In a 2019 paper in Science, a team of researchers from HMS, Harvard Law School, and MIT showed how these types of attacks could threaten the integrity of medical imaging. They added adversarial noise to an image of a benign mole, causing an AI model to reclassify the mole as malignant with 100 percent confidence.

Visions of the future

“At the moment, what you’ve got is a good computer and a good human,” says Wolfe. The obvious solution would seem to be to have humans use AI to improve the performance of each. But it’s not that simple. One recent study by HMS researchers showed that some radiologists’ performance actually worsened when they used AI.

“This question of how to get humans and their machines to work together optimally is a fascinating problem,” says Wolfe, pondering the potential reasons that the sum of a human and a computer is not always greater than the parts. If the AI model tends to make mistakes, physicians may be less willing to trust it. On the other hand, if it’s too reliable, physicians may lose the skill required to step in when it fails. (Think of the state of your map-reading skills after years of relying on GPS.)

As for removing humans from the equation, Wolfe says, “computer vision is getting better all the time, but it’s not perfect, and nobody is willing to just let the computer do the task.” Still, he muses, “If you think about it, once upon a time, there must have been somebody who was counting red blood cells to get your red blood cell count, and now you’re perfectly happy to hand that over to whatever machine is doing it. Maybe that’ll be the case for breast cancer screening one day.”

Kreiman, who sees most of the current limits to computer vision as temporary, echoes these thoughts. “Many children today are amazed that some of us did arithmetic by hand instead of using calculators, or navigated using physical maps instead of digital ones,” he says. “Children in the future may be surprised that we once used human vision for clinical diagnosis.”

Molly McDonough is the associate editor of Harvard Medicine magazine.

Images: Mattias Paludi (trees); courtesy of Jeremy Wolfe (video animations); Qqqqqq at English Wikipedia (chair); leungchopan/iStock/Getty Images Plus (panda)